ADF (Application Development Framework) is Oracle's application development framework. It is built on top of Java EE and is commonly used in Oracle Fusion Applications, so Oracle uses it in its own applications as well.

ADF applications are developed using JDeveloper.

ADF and JDeveloper versions usually match. That is, we use JDeveloper 12c to develop ADF 12c applications.

ADF 12c renders the UI with HTML5. In ADF 11g, on the other hand, some components are still rendered using Flash.

If we are interested in developing web applications, we need to talk about ADF.

There is also another framework named MAF (Mobile Application Framework), which is used for developing mobile applications. In MAF, HTML5 and JavaScript are used for rendering the UI components.

To develop ADF applications, we only need JDeveloper as the IDE. We do everything from design to deployment in JDeveloper.

Development for SOA and WebCenter is also done using JDeveloper. For example, the UI for SOA is built with ADF.

ADF uses the Model-View-Controller architecture.

When we develop in ADF, we usually work on XML files. We don't deal with Java code very often.

ADF Business Components is the database access technology. It is based on object-relational mapping, like other frameworks such as Hibernate, Spring, TopLink, etc.

Generally speaking, with this model we declare our tables as Java objects and access the tables through these objects.

We have more than 150 Faces components in ADF. Most of them come with Ajax support. ADF brings Ajax, XML and JavaScript together to provide partial page rendering. With partial page rendering, only the area that needs to be refreshed gets refreshed.

In other words, the whole page does not need to be refreshed when something changes in a particular field.
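To make the idea concrete, here is a minimal sketch in plain Java (it is not ADF code). It assumes each component declares which other components it listens to, similar in spirit to a partial trigger, and computes which components need to be redrawn when one field changes. The class and method names are made up for illustration.

```java
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Toy model of partial page rendering: redraw only what depends on the changed field. */
final class PartialRenderSketch {

    /**
     * @param triggers  maps a component id to the ids of the components it listens to
     * @param changedId the component whose value just changed
     * @return the ids of the components that listen to changedId, in declaration order
     */
    static Set<String> componentsToRefresh(Map<String, List<String>> triggers, String changedId) {
        Set<String> refresh = new LinkedHashSet<>();
        for (Map.Entry<String, List<String>> entry : triggers.entrySet()) {
            if (entry.getValue().contains(changedId)) {
                refresh.add(entry.getKey());
            }
        }
        return refresh;
    }
}
```

If a "total" field listens to "quantity" and "price", changing "price" returns only "total"; a footer that listens to nothing is left alone.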

ADF 11g includes ADF Faces, ADF Task Flow, ADF Model/Bindings, ADF Business Components and ADF Security.

ADF Faces supplies the UI with Ajax support.

ADF Task Flow supplies the mechanism to declare flows and reusable web pages.

Model & Bindings bind the UI to the business services.

Business Components provide the database access.

ADF Security provides authentication and authorization services for our applications.

In an ADF task flow, we can use method calls and routers.

Login -> Dashboard -> Method Call -> Router -> commit

I -> return
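A flow like this can be thought of as a small state machine. The sketch below is plain Java, not the ADF controller API. It reads the diagram above as a router that branches to either commit or return, which is my interpretation; the activity and outcome names are invented for the example.

```java
import java.util.HashMap;
import java.util.Map;

/** Toy task flow: activities connected by named outcomes. */
final class TaskFlowSketch {
    private final Map<String, String> transitions = new HashMap<>();

    /** Registers the case "from --outcome--> to". */
    TaskFlowSketch addCase(String from, String outcome, String to) {
        transitions.put(from + "/" + outcome, to);
        return this;
    }

    /** Returns the next activity, or stays on the current one if no case matches. */
    String next(String current, String outcome) {
        return transitions.getOrDefault(current + "/" + outcome, current);
    }

    /** A router evaluates a condition and produces an outcome name. */
    static String routeOnChanges(boolean hasPendingChanges) {
        return hasPendingChanges ? "save" : "cancel";
    }

    /** Builds the example flow (all names are illustrative). */
    static TaskFlowSketch example() {
        return new TaskFlowSketch()
            .addCase("login", "success", "dashboard")
            .addCase("dashboard", "submit", "methodCall")
            .addCase("methodCall", "done", "router")
            .addCase("router", "save", "commit")
            .addCase("router", "cancel", "return");
    }
}
```

In a real task flow these cases are declared in XML and drawn in the JDeveloper diagram editor; the point here is only that the router picks the outcome and the flow definition picks the next activity.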

Metadata Services (MDS) is used for customizations.

We can build different pages for different people or sites. MDS needs a repository. This repository can be file based, or it can be a database (the DB schemas are created using RCU).

Using MDS, application customizations or user customizations can be done. Design at runtime can also be implemented (for designing dashboards).
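The layering idea behind customizations can be sketched in plain Java as follows. This is not the MDS API; it only assumes that a base document is overridden first by a site layer and then by a user layer. The property names and layer contents are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Toy customization layers: later layers override earlier ones, the base stays untouched. */
final class CustomizationLayersSketch {

    static Map<String, String> resolve(Map<String, String> base, List<Map<String, String>> layers) {
        Map<String, String> result = new LinkedHashMap<>(base);
        for (Map<String, String> layer : layers) {
            result.putAll(layer); // a layer only stores what it changes
        }
        return result;
    }
}
```

With a base of title "Dashboard" and 2 columns, a site layer that changes the title and a user layer that sets 3 columns, the resolved page has the site's title and the user's column count, while the base definition itself is not modified.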

As I mentioned before, we use JDeveloper to develop our applications.

The installation is very easy, just next and next :)

After the installation, we start JDeveloper and then start the built-in WebLogic Server that comes with it, using Run > Start Server Instance. So we use JDeveloper to manage this integrated WebLogic Server. Afterwards, we can connect to the WebLogic console at http://localhost:7010/console. Default admin user = weblogic, default password = weblogic1

JDeveloper aims to let us design our projects visually. We can use drag and drop to add components to our projects. JDeveloper provides What You See Is What You Get (WYSIWYG) UI design.

We can design our application visually, but we can also go into the code if we need to.

There are a lot of features in the IDE. For example, we can run a dependency analysis or use contextual linking (we can see the hierarchy by clicking on the source).

In addition, we can do EJB (Enterprise JavaBeans) modeling in JDeveloper. Oracle TopLink can be used as well. Using XML, the data sources in the database are mapped to Java objects.

Note that ADF 12c is based on JSF 2 (JSF 1.2 was the version used by ADF 11g Release 1).

Also, in JDeveloper we can create web services easily, and we can run and test them inside JDeveloper. We can even expose an ADF Business Component as a web service.

Note that web services are described using a language named WSDL (Web Services Description Language).

Team development is also supported in JDeveloper. We can use tools like CVS, Serena and Subversion inside JDeveloper for team development.

Besides Java, we can also do database development in JDeveloper. SQL Developer comes embedded in JDeveloper.

A database connection can be declared, and the database objects can be reached using the Database Navigator of JDeveloper. The IDE has a query builder, and we can even check the explain plans of our queries.

Applications developed with ADF are deployed to the application server as archive files (typically EAR files; reusable ADF libraries are packaged as JAR files). We can declare direct (JDBC URL) or data source based DB connections in JDeveloper. Naturally, using a data source has advantages, such as connection pooling.
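Why pooling is an advantage can be shown with a small plain-Java sketch. This is not how WebLogic implements its data sources; it is a toy pool (not thread-safe, names invented) that hands out an idle resource before creating a new one, so an expensive connection is opened once and then reused.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.function.Supplier;

/** Toy resource pool: reuse idle resources, create new ones only when none are idle. */
final class PoolSketch<T> {
    private final Deque<T> idle = new ArrayDeque<>();
    private final Supplier<T> factory;
    private int created = 0;

    PoolSketch(Supplier<T> factory) {
        this.factory = factory;
    }

    /** Returns an idle resource if there is one, otherwise creates a new one. */
    T borrow() {
        T pooled = idle.pollFirst();
        if (pooled != null) {
            return pooled;
        }
        created++;
        return factory.get();
    }

    /** Puts a resource back so the next caller can reuse it. */
    void giveBack(T resource) {
        idle.addFirst(resource);
    }

    /** How many resources were actually created (the expensive operation). */
    int createdCount() {
        return created;
    }
}
```

With a direct connection, every request pays the cost of opening a connection. With a pool, borrowing, giving back and borrowing again creates only one resource.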

In ADF Security, task flows can be secured as well as pages.

ADF Business Components generate events. These events act as bridges between our applications and business processes.

We can use the Groovy scripting language for simple calculations (for example, we can take the salary from the database and add, divide or compare it using Groovy).

ADF Faces includes a set of over 150 Ajax-enabled JSF components for building a richer web UI.

Dynamic and interactive applications can be developed with it (drag and drop, Ajax-enabled components, a complete JavaScript API, partial page rendering, etc.). It follows Web 2.0 standards.

Validation can be done in the UI without going to the server, so the inputs entered in the UI can be checked at the UI level. There is also listener logic: when a button is pressed, an action listener runs. There are graphs, Flash-based components and also SVG rendering.

Besides, we have templates in ADF. A template can be created and used for every page (for example, to display the copyright or the login/logout buttons on every page).

Normally, the pages in ADF are JSF pages, but they can be broken up into page fragments. These fragments can be used in several pages, which means less development effort. For example, if we need a form in every page of our application, we can build a page fragment that includes this form and reuse it in several pages.

The logic behind page regions is similar. The primary reason to use regions is reuse. For example, we can build a workflow and put it into regions on several pages.

Another component for reusability is the declarative component. For example, we can put our calendar, button, etc. into a panel and then turn this panel into a component that we can reuse wherever we want.

Okay, let's talk about the Controller layer in ADF. It is like a bridge between the Model and the View. It provides a mechanism to control the flow of the web application.

Let's explain this with an example:

When a button is pressed, the controller determines the action that needs to be taken. For example, when a submit button is pressed, the controller decides to save the entered inputs and navigate to the next page.

When we open JDeveloper for the first time, it asks us to choose a role. The capabilities of the IDE are determined by the role we choose. JDeveloper also has a planning capability: we can have a checklist, so we can follow the project to see whether the data source or the business services have been created. We can track these activities by their statuses (started / in progress / not started).

This application overview is a capability of the IDE. Like the tutorials, it also has a description/how-to for accomplishing each step. There is also tutorial-style information in Help.

JDeveloper can deploy projects to a remote WLS server, too.

In JDeveloper, we can preview files without actually opening them. We can do this even for images.

The ADF components can be accessed from the Component Palette of JDeveloper. We can drag and drop components into the pages.

In the Resource Palette, all the DB and application connections can be seen.

In ADF, the object-table mapping is done through Entity Objects.

So Entity Objects (EOs) bring the database tables into the code, and to insert, update or delete through the Entity Objects, View Objects need to be created.

View Objects provide read-only queries or a way to update the data in the database through the Entity Objects. Note that read-only View Objects reach the database directly, not through the Entity Objects.

When we update a View Object and commit, the View Object updates the cache of the Entity Object, so it is the Entity Object that makes the update.
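The following plain-Java sketch illustrates this division of work. It does not use the real ADF BC classes; the table is just a map, and the class and attribute names are invented. A change made through the view row lands in the entity cache first and reaches the table only on commit.

```java
import java.util.HashMap;
import java.util.Map;

/** Toy entity cache: holds pending attribute changes until commit. */
final class EntityCacheSketch {
    private final Map<Integer, Map<String, Object>> database; // stands in for the table
    private final Map<Integer, Map<String, Object>> pending = new HashMap<>();

    EntityCacheSketch(Map<Integer, Map<String, Object>> database) {
        this.database = database;
    }

    /** What a view row does on update: write to the cache, not to the table. */
    void setAttribute(int key, String name, Object value) {
        pending.computeIfAbsent(key, k -> new HashMap<>()).put(name, value);
    }

    /** Reads see pending changes first, then the stored row. */
    Object getAttribute(int key, String name) {
        Map<String, Object> changes = pending.get(key);
        if (changes != null && changes.containsKey(name)) {
            return changes.get(name);
        }
        Map<String, Object> row = database.get(key);
        return row == null ? null : row.get(name);
    }

    /** Commit: the entity layer posts the cached changes to the table. */
    void commit() {
        pending.forEach((key, changes) ->
            database.computeIfAbsent(key, k -> new HashMap<>()).putAll(changes));
        pending.clear();
    }
}
```

After setting Salary to 1200 on row 1, the cache already returns 1200 while the table still holds the old value; the table changes only when commit is called.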

Multiple View Objects can be created on a single Entity Object. View Objects also let us look at the Entity Objects from different perspectives.

View Links can be created between View Objects. In addition, Associations can be created between Entity Objects. Thus the relationships in the DB layer can be represented using View Links and Associations.

Refactoring is another capability of JDeveloper. When we rename an object, we can use refactoring to change the name everywhere in the project where the object is referenced (its dependencies).

Synchronization between Entity Objects and the database is done automatically. On the other hand, we need to refresh the View Objects manually. (We are talking about the data here; for DDL changes we need a manual refresh anyway.)

There is also an option to create join View Objects. That is, we can build one View Object using columns from several Entity Objects.

Another thing to consider: in JDeveloper, it is easy to create web services.

We can add the @WebService annotation just above the class definition in the Java code, and the web service will be created automatically.

Also, we put the @WebMethod annotation just above the method definition.

An example of this can be found here:

https://docs.oracle.com/cd/E18941_01/tutorials/jdtut_11r2_52/jdtut_11r2_52_1.html

Okay. We can say that Oracle Applications and Enterprise Manager use ADF Faces, too.

Note that we do not need ADF Business Components in order to use ADF Faces. We can build the UI using ADF Faces and build the backend with something completely different from Business Components.

ADF comes with a strong JavaScript API. JavaScript plays a big role in building rich content.

JavaScript is a scripting language. It runs on the client and lets us do some work there without going to the server.

ADF sends its JavaScript files from the server to the browser in compressed form.

When we think of validation in ADF, we can say that the first validation is done in the browser (for example, whether the user entered a date in a date field).

In the backend, there is central validation in the ADF BC components.

In the context of validation, we can display exceptions, roll back transactions and display default pages. Note that we can build our own custom exceptions for this.

Note that in standard JSF there is no client-side validation. In ADF, on the other hand, there is client-side validation using JavaScript.
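The two tiers can be sketched as follows. This is plain Java with invented names, not ADF validator code. The first check corresponds to what the browser can decide on its own (is the input a date at all?), and the second one is a business rule that belongs in the backend. The "hire date must not be in the future" rule is a made-up example.

```java
import java.time.LocalDate;
import java.time.format.DateTimeParseException;
import java.util.Optional;

/** Toy two-tier validation: format check first, business rule second. */
final class ValidationSketch {

    /** First tier (UI level): is the raw input a well-formed ISO date such as 2024-01-31? */
    static Optional<LocalDate> parseDate(String raw) {
        try {
            return Optional.of(LocalDate.parse(raw));
        } catch (DateTimeParseException e) {
            return Optional.empty();
        }
    }

    /** Second tier (business level): hypothetical rule, the hire date must not be in the future. */
    static boolean isValidHireDate(LocalDate hireDate, LocalDate today) {
        return !hireDate.isAfter(today);
    }

    /** Runs both tiers in order and reports which one rejected the input, if any. */
    static String validate(String raw, LocalDate today) {
        Optional<LocalDate> parsed = parseDate(raw);
        if (!parsed.isPresent()) {
            return "format error";
        }
        return isValidHireDate(parsed.get(), today) ? "ok" : "business rule violated";
    }
}
```

A badly formatted value is rejected by the first tier and never reaches the business rule, which is the saving that client-side validation gives us.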

When we talk about ADF Data Controls, we can list the following:

ADF Business Components

Java Classes

EJB

URL

Web Services

Essbase

Place Holder

Our own custom data controls

Data Controls are the bridges between the data source and the user interface in ADF web applications.

View Objects reside in Data Controls. We can also build our own methods and expose them in Data Controls. In other words, the objects that let us reach the data reside in the Data Control.

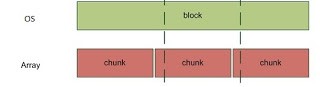

User Interface <--> Data Bindings <--> Data Controls <--> Business Service

Using bindings, the UI components can be bound to the Data Control objects (for example: when the button is pressed, do the commit).

The bindings for a page are stored in its PageDef.xml file.

Page.jspx -------------------------------- DataBindings.cpx-------------PageNameDef.xml

DataBindings.cpx : This file defines the Oracle ADF binding context for the entire application and provides the metadata from which the Oracle ADF binding objects are created at runtime.

PageNameDef.xml : These XML files define the Oracle ADF binding container for each web page in the application. The binding container provides access to the bindings within the page. We will have one XML file for each databound web page.

When we add a button to the form and drag and drop the "commit action" onto the action listener of the button, we are done. That is the binding.

We can reach the bindings using Expression Language (EL), which is a Java standard.

#{bindings.Product.rangeSize}

Note that Product refers to the View Object, and rangeSize is the setting that controls how many rows are fetched at a time (for example: show me the rows of the Product table, but only rows 1-10).

or

actionListener="#{bindings.Commit.execute}"
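To show what such an expression boils down to, here is a very small plain-Java sketch. It is not the real EL engine or the ADF binding container; it only assumes that the bindings can be modeled as nested maps and walks the dotted path inside #{...}. All names in it are illustrative.

```java
import java.util.Map;

/** Toy resolver for expressions like #{bindings.Product.rangeSize} over nested maps. */
final class BindingLookupSketch {

    static Object resolve(String expression, Map<String, Object> root) {
        String trimmed = expression.trim();
        if (!trimmed.startsWith("#{") || !trimmed.endsWith("}")) {
            throw new IllegalArgumentException("Not an EL expression: " + expression);
        }
        // Strip the "#{" prefix and "}" suffix, then walk the path segment by segment.
        String path = trimmed.substring(2, trimmed.length() - 1).trim();
        Object current = root;
        for (String segment : path.split("\\.")) {
            if (!(current instanceof Map)) {
                return null; // the path goes deeper than the data does
            }
            current = ((Map<?, ?>) current).get(segment);
        }
        return current;
    }
}
```

If the root map contains bindings -> Product -> rangeSize = 10, the first expression resolves to 10. If bindings -> Commit -> execute holds a Runnable, the second expression resolves to that Runnable, which the button's action listener would then run.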

Okay. That's all for now.

I will continue to write about ADF. The next ADF post will be based on examples.